It has been nearly four months since Google turned the SEO world upside down with the release of the Panda (Farmer) update. Hundreds of thousands of websites have been impacted by this update. While Google claims only a small amount of search queries were impacted by this change (roughly 11.8% according to Google), the effects have dealt some heavy hits to countless webmasters.

One such site I have been asked to work with was hit really hard. The website is aesthetically pleasing, has great domain age, diverse backlinks, no advertisement blocks, and is definitely a site that you would view as a trustworthy source. This site had thousands of pages of content which was great. However, nearly 80% of that content was "licensed" content which essentially meant that they were not the only website that had access to use it. In other words, they had a significant amount of duplicate content!

Pulling Content Data From Google Analytics

Knowing that the duplicate content issue was causing the majority of the negative SEO issues and ultimately knew we would need to remove it, I first wanted to segment the organic traffic the site received to both the duplicate content as well as the original content. This would allow us to see if Google was penalizing only the duplicate content, original content, or both. In order to do this, I went into Google Analytics > Traffic Sources > Search Engines > Google. I then selected "Landing Pages" to be displayed in the first column, then sorted from largest to smallest in the "visits" column. For the last step, I selected the data to show the first 500 rows of data for my export. Once I had the data I wanted to analyze, I then modified the date range to show a month at a time, starting back in December. This would allow me to show a few months worth of history before the Panda update rolled out. As I went through each month, I would export the data for Microsoft Excel. Now this is where the fun starts...Distilled has an excellent write up on using Excel for SEO, which I highly recommend sifting through should you be interested in creating something similar to what I am about to show/explain.

Using Microsoft Excel To Analyze Google's Panda Impact

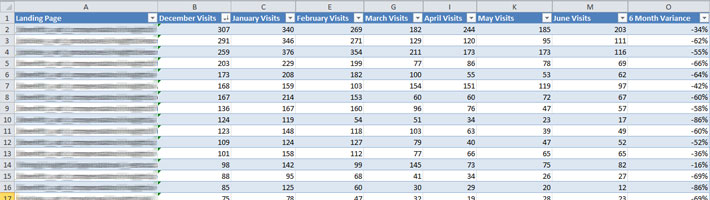

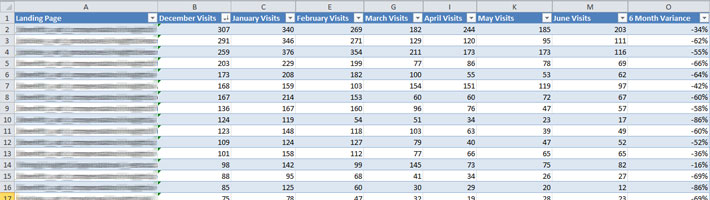

Luckily for me, I was given a list of the content that was original on this site. Armed with this info, I proceeded to create an Excel document that would allow me to segment all the data I just exported from Google Analytics. The approach went like this...I created two tabs in Excel, one for the original content and the second for the duplicate content. Within each of these tabs, I created columns for the landing page, each of the months I had data for, and a variance column that showed the increase/decrease in traffic to that particular piece of content since the first month I had data for. In order to figure out what landing pages were duplicate content, I used excel to combine all landing pages from my Analytics export with the list of original content I was provided. I then removed all duplicates and was left with a list of the duplicate content landing pages. In the landing page column of each tab, I simply copy and pasted the location of the content. I renamed the title of the first column to be called "Landing Page". Please note in order to get this to work with later steps, I needed to remove the "http://www.domain.com" prefix from anything I placed in this column. Now we have the basic foundation in place (aside from the formulas) I will be adding later to pull the data we need to show. Next, I created a new tab for each month of data exported from Google Analytics. I simply opened each of the CSV file's downloaded and copy and paste the data into the correct tab for the month the data represents. Once I pasted the data into a tab, I needed to create a table with the data. To do this, I selected all the data and hit "Ctrl+T" on the keyboard. This will create a table automatically. I needed to take note of the name of the table created as I will need to know this later, or an easier thing to do is to simply rename the table to the month of the data making it easy to remember. Once I had all the data for each month, it was time to pull the data needed to populate the original content tab as well as the duplicate content tab. Going back to the Distilled Excel for SEO tutorial, I focused on using the VLOOKUP function to do most of what I needed. I won't go into all the details here (as I am not an Excel Ninja), but the way it pretty much works is that you can lookup corresponding data from another sheet and import it into a cell on your current tab. In my spreadsheet, the formula I was using in each of the "month" columns was something like this:=IFERROR(VLOOKUP([@[Landing Page]],December,2,0),0)Basically, this formula means that if there is an error with the data, then a "0" would be displayed in the cell. However, the formula would look up the data listed "Column A" which is aptly called "Landing Page" and will look for the same landing page row in the "December" table and import the corresponding data located in column 2, which is column B. Sounds kind of confusing but is actually a beautiful thing to see in action. I then proceeded to copy and modify the formula for each of the months/columns in the duplicate and original content tabs resulting in an aggregation of data relating to only original content as well as duplicate content respectively.

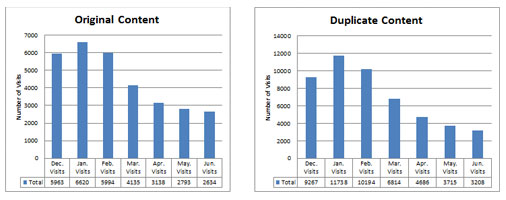

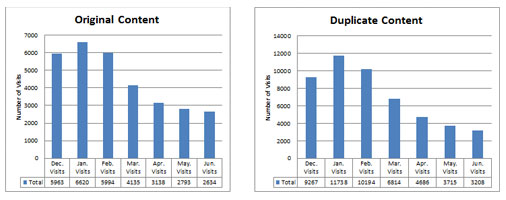

Visualizing The Analytics Data

Now that I had all the data nicely organized, it was time to put it into something that visually displayed the data to key stakeholders. To do this I proceeded to create two pivot tables with the data, converting the data into bar graphs. Creating a visual representation, allowed for easy interpretation of the data we have collected.

Srimoyi September 30, 2018

Awesome analysis! Although I still have a doubt, if someone ever copies my content , will Google recognize that mine is the original content? I hope it will not have any bad impact on my traffic? I use 100% genuine contents those I write myself, and don’t even use any sort of copywrite material.